Part of the attractiveness of the method of moments was probably that it gave maximum likelihood estimators for some interesting models (exponential family models) but was computationally tractable in some cases where the maximum likelihood estimator wasn't. More generally, maximum likelihood estimators and other M-estimators use means of summaries that (in general) aren't just powers of the data. These aren't fatal objections, but they do make the maths harder. Also, the functions involved aren't smooth. Alternatively, if you define it by solving an equation (sum of +1 for positive residuals and -1 for negative residuals) there isn't always an exact solution to the equation. If you define a quantile by a minimisation (minimise sum of absolute values of residuals for the median) there isn't always a unique solution. There are technical annoyances about quantiles that make them less popular.

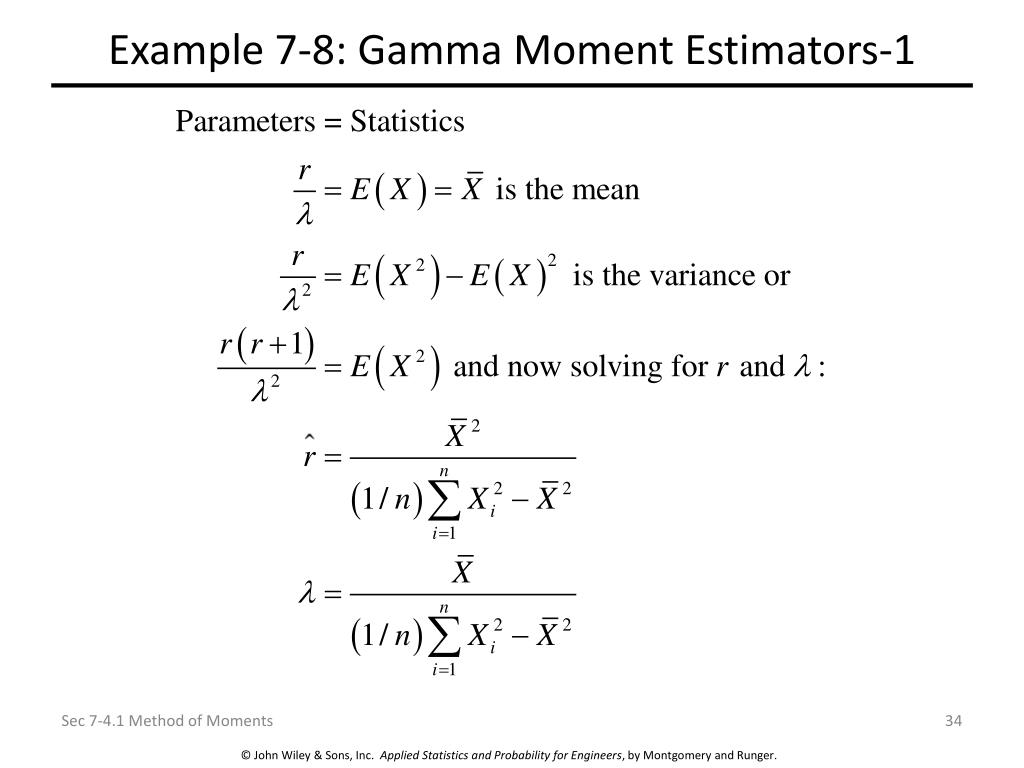

Similarly, you can estimate a scale parameter using differences in quantiles (eg, interquartile range). Sometimes the mean will be more efficient (eg, Normal), sometimes the median will be more efficient (eg, Laplace). For example, you can estimate the parameter in any location family using the median instead of the mean. In a $p$-dimensional parametric family you can set $p$ observed quantiles equal to their expected values and (for most choices of the quantiles) get consistent estimators. 12.1 Method of moments If is a single number, then a simple idea to estimate is to nd the value of for whichthe theoretical mean ofX f(xj ) equals the observed sample meanX 1+: (X1: :+Xn).

Yes, you can have a 'method of quantiles'.
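To make the 'method of quantiles' idea concrete, here is a minimal Python sketch for a Normal sample, where $p = 2$. It sets the sample lower and upper quartiles equal to the theoretical ones, $\mu \pm z_{0.75}\,\sigma$, and solves for $\mu$ and $\sigma$. A method-of-moments fit is included for comparison. The function names are made up for illustration, and the quartiles are just one convenient choice of two quantiles; this is an assumption-laden sketch, not a recommended estimator.

```python
import random
from statistics import NormalDist, fmean, pstdev, quantiles


def normal_method_of_quantiles(xs):
    """Estimate (mu, sigma) by matching the sample quartiles Q1 and Q3
    to the Normal quartiles mu - z*sigma and mu + z*sigma."""
    q1, _, q3 = quantiles(xs, n=4)       # sample quartiles (middle one unused)
    z = NormalDist().inv_cdf(0.75)       # upper quartile of the standard Normal
    mu_hat = (q1 + q3) / 2               # midpoint of the two quartiles
    sigma_hat = (q3 - q1) / (2 * z)      # interquartile range, rescaled
    return mu_hat, sigma_hat


def normal_method_of_moments(xs):
    """Estimate (mu, sigma) by matching the first two moments."""
    return fmean(xs), pstdev(xs)         # pstdev divides by n, as moment matching does


if __name__ == "__main__":
    rng = random.Random(1)               # fixed seed so the run is repeatable
    sample = [rng.gauss(10.0, 2.0) for _ in range(5000)]
    print("quantiles:", normal_method_of_quantiles(sample))
    print("moments:  ", normal_method_of_moments(sample))
```

For Normal data both pairs of estimates should land near the true values, with the moment-based ones typically a little tighter, in line with the efficiency remark about the mean versus the median.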
